My team recently carried out an online survey to gauge the views of users on their experience of a digital service we built. Included in the survey was an open question to give users an opportunity to provide any comments. We received 174 comments, some of which are shown below.

Task

We received a good mixture of positive and negative comments. I needed to group them logically so that team members could analyse them more easily.

Manually sorting these comments would have been an onerous task. The team wasn’t after in-depth categorisations but a broad insight into users’ opinions.

Sentiment analysis

I decided the quickest way to achieve this was through a sentiment analysis tool. Sentiment analysis identifies whether a piece of text is positive, negative or neutral.

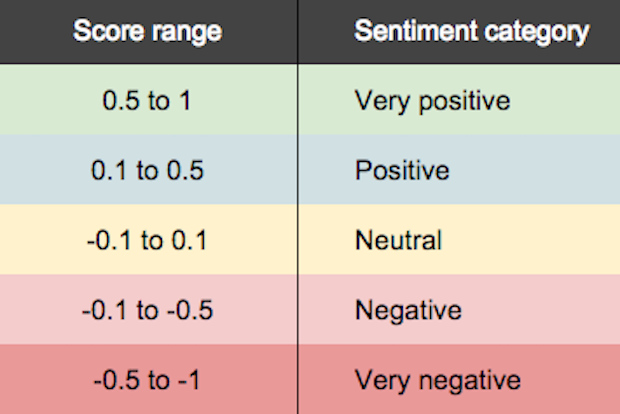

A variety of online tools use machine learning to determine the ‘view’ expressed in a piece of text, based on the words used. These tools assign each statement a number between -1 and 1. A value of 1 represents a strongly positive sentiment, 0 is neutral and -1 is the most negative. Comments that consist of a single positive or negative word tend to receive scores close to the extremes.

Sentiment analysis is not an exact science. The nature of machine learning means that some comments will be incorrectly categorised.

The Repustate tool

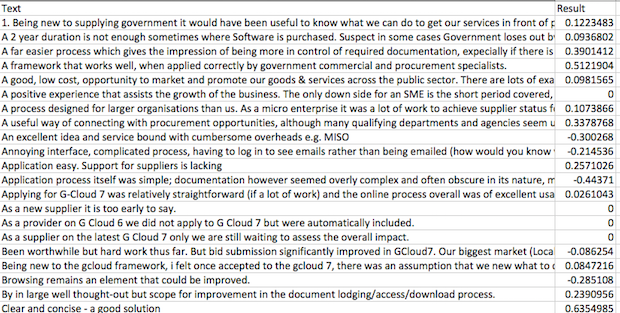

I used a free tool developed by Repustate. I pasted the text to be analysed into an Excel spreadsheet they provided, then sent it to them. They emailed the data back to me with a sentiment score for each comment added in an adjoining column.

Sense checking the scoring

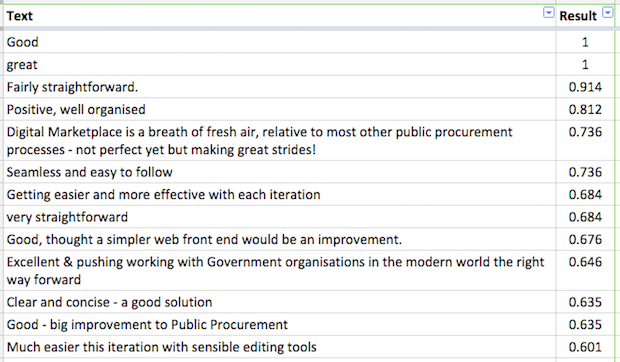

The sentiment scores the tool assigned were generally correct. For example, this comment received a score of 0.684:

“Getting easier and more effective with each iteration”

This comment was classified as being neutral:

“As a new supplier it is too early to say”

The tool identified this comment as being negative (-0.514):

“Very clunky if/when we need to make changes to service definitions or lower prices”

But some of the scores didn’t match the sentiment. For example, this comment received a neutral score even though it was positive:

“Substantially improved application process over previous years”

Cleaning the data

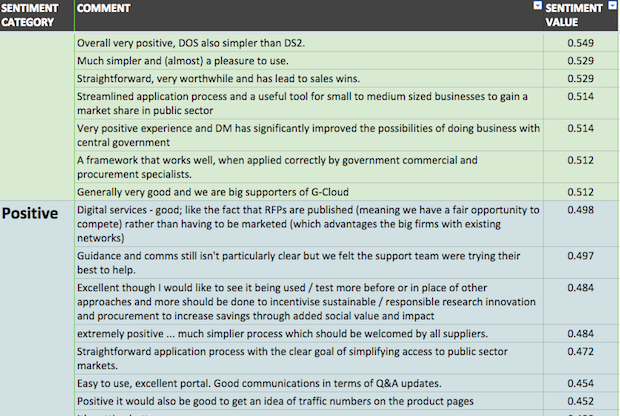

I copied the data into a Google Sheet, formatted the sentiment scores to 3 decimal places and then sorted the comments by score in descending order. This is what the top rows looked like:

To group comments together I colour-coded them using these range values and categories:

I then added suitable headers to complete the spreadsheet.
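The same sort-and-group step could also be scripted. The sketch below is a minimal Python illustration, not the method used for this analysis. The thresholds `POSITIVE_MIN` and `NEGATIVE_MAX`, the `ScoredComment` class and the function names are all hypothetical, and the score of 0.0 for the neutral comment is a placeholder because its exact score isn't recorded here.

```python
from dataclasses import dataclass

# Hypothetical cut-off values, chosen only for illustration.
POSITIVE_MIN = 0.25
NEGATIVE_MAX = -0.25


@dataclass
class ScoredComment:
    text: str
    score: float  # sentiment score between -1 and 1


def categorise(score: float) -> str:
    """Map a score between -1 and 1 to a broad sentiment category."""
    if score >= POSITIVE_MIN:
        return "positive"
    if score <= NEGATIVE_MAX:
        return "negative"
    return "neutral"


def prepare(comments: list[ScoredComment]) -> list[tuple[str, str, str]]:
    """Sort by score, highest first, and return (score, category, text) rows."""
    ordered = sorted(comments, key=lambda c: c.score, reverse=True)
    # Format each score to 3 decimal places, as in the spreadsheet.
    return [(f"{c.score:.3f}", categorise(c.score), c.text) for c in ordered]


comments = [
    ScoredComment(
        "Very clunky if/when we need to make changes to service definitions or lower prices",
        -0.514,
    ),
    ScoredComment("Getting easier and more effective with each iteration", 0.684),
    # Placeholder score: this comment was classified as neutral, exact value unknown.
    ScoredComment("As a new supplier it is too early to say", 0.0),
]

rows = prepare(comments)
for score, category, text in rows:
    print(score, category, text)
```

Keeping the cut-off values as named constants makes it easy to adjust the ranges if the groupings don't look right on a first pass.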

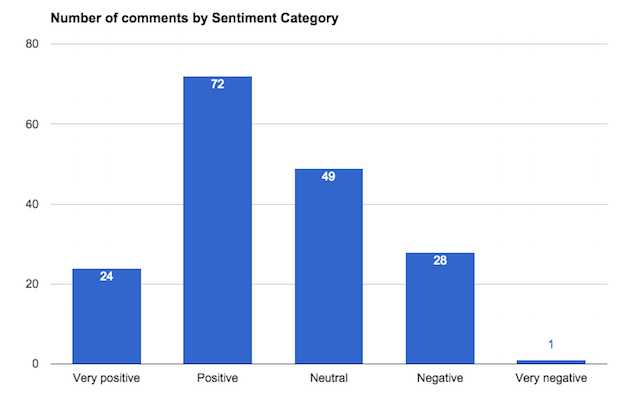

Grouping the data in this way also made it easy to chart the data by the sentiment category.
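As a rough illustration of how grouped scores feed a chart, the Python sketch below counts comments per category and prints a simple text bar for each. The `categorise` function, its threshold defaults and the sample scores are assumptions made for the example. Only 0.684 and -0.514 are scores quoted in this post.

```python
from collections import Counter


def categorise(score: float, positive_min: float = 0.25, negative_max: float = -0.25) -> str:
    """Map a score to a category; the default thresholds are hypothetical."""
    if score >= positive_min:
        return "positive"
    if score <= negative_max:
        return "negative"
    return "neutral"


# Sample scores: 0.684 and -0.514 are quoted in the post, 0.0 is a placeholder.
scores = [0.684, 0.0, -0.514]

# Count how many comments fall into each category.
counts = Counter(categorise(s) for s in scores)

# Print one bar per category, in a fixed order.
for category in ("positive", "neutral", "negative"):
    print(f"{category:<9}{'#' * counts[category]} {counts[category]}")
```

The counts could equally be passed to a charting tool or pasted back into a spreadsheet.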

How it’s helped us

Grouping the comments using these methods provided the team with a quick and easy way to understand users’ opinions. The feedback is helping us to improve our processes.

We’ll keep experimenting with different tools and will continue to share our experiences. If you’ve analysed comments yourself, we’d love to hear about it in the comments below.

Ashraf Chohan is a senior performance analyst in GDS.

2 comments

Comment by Ashraf posted on

Thanks Ange

As mentioned above, this method of analysis isn't perfect, but it is useful for obtaining a general summary of the emotions expressed. Over time I expect the accuracy of these tools to get better as they become more sophisticated.

Comment by Ange Moore posted on

Interesting how you see “Substantially improved application process over previous years” as being a positive comment - I think I agree with the neutral scoring that it received - it does say that things are better, but that doesn't automatically mean that they are good, perhaps just not as bad as before (e.g. improvement on what might have been absolutely awful, and has now made it to just not very good). I think this highlights how difficult it really is to assess something on a non-emotive basis - and where machines may be more accurate than we'd perhaps like to think.